- 3 minutes 23 seconds "Women should be able to open things" by KatjaGrace

I’m pretty annoyed today, for nominal reasons ranging between ‘petty’ and ‘doesn’t even make sense’. I’m not entirely sure how or if to take oneself seriously when one has such absurd grievances. But that's a question for another time; I’m here now to tell you about my one potentially valid peeve.

I understand that gender is complicated and difficult, for the whole species (and honestly probably more so for some other species). And it can be hard to tell exactly if anyone is behaving badly regarding it, at least in my modern bubble. Maybe women just aren’t that into designing programming languages? Maybe the thing I’m saying is just boring and a man is saying a more interesting thing?

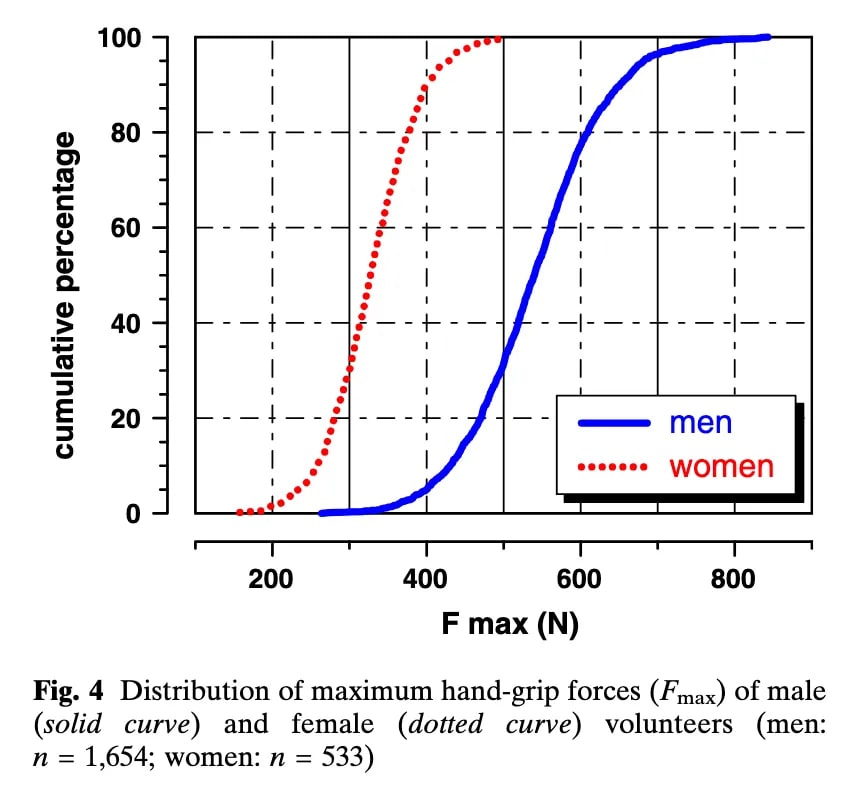

But a thing that is undeniable is that women want to open jars, dammit! What's your nuanced explanation there, Bonne Maman? Does the proper amount of friction for maintaining spread safety fall just between the male and female human grip strength distributions?

This study suggests that would be about 400N Fmax (though this would not avert most elite female athletes acquiring jam, see second figure, and the pictured participants are young adults):

The distributions are really surprisingly [...]

---

First published:

May 21st, 2026

Source:

https://www.lesswrong.com/posts/bB5EDwcYH3GwoRWZf/women-should-be-able-to-open-things

---

Narrated by TYPE III AUDIO.

---

Images from the article:

Apple Podcasts and Spotify do not show images in the episode description. Try Pocket Casts, or another podcast app.

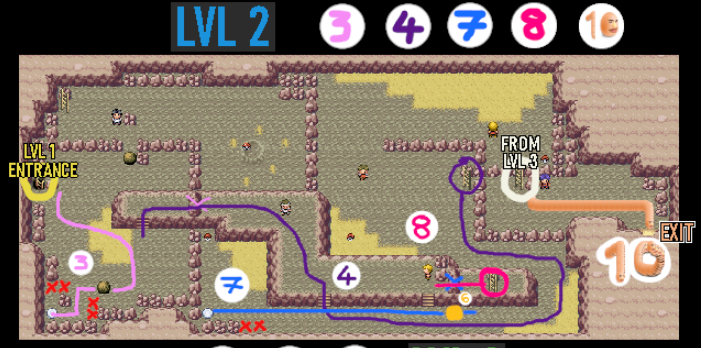

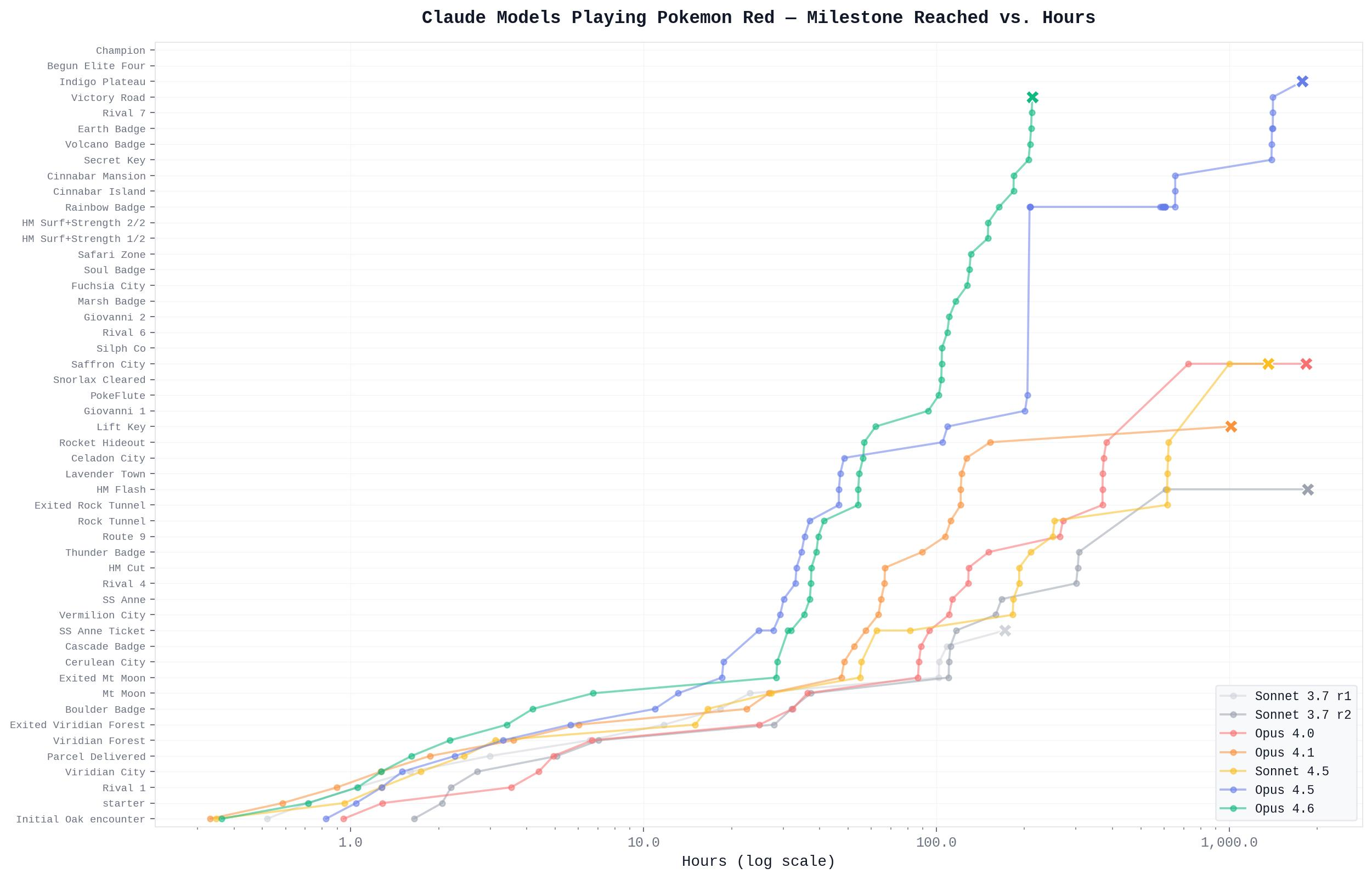

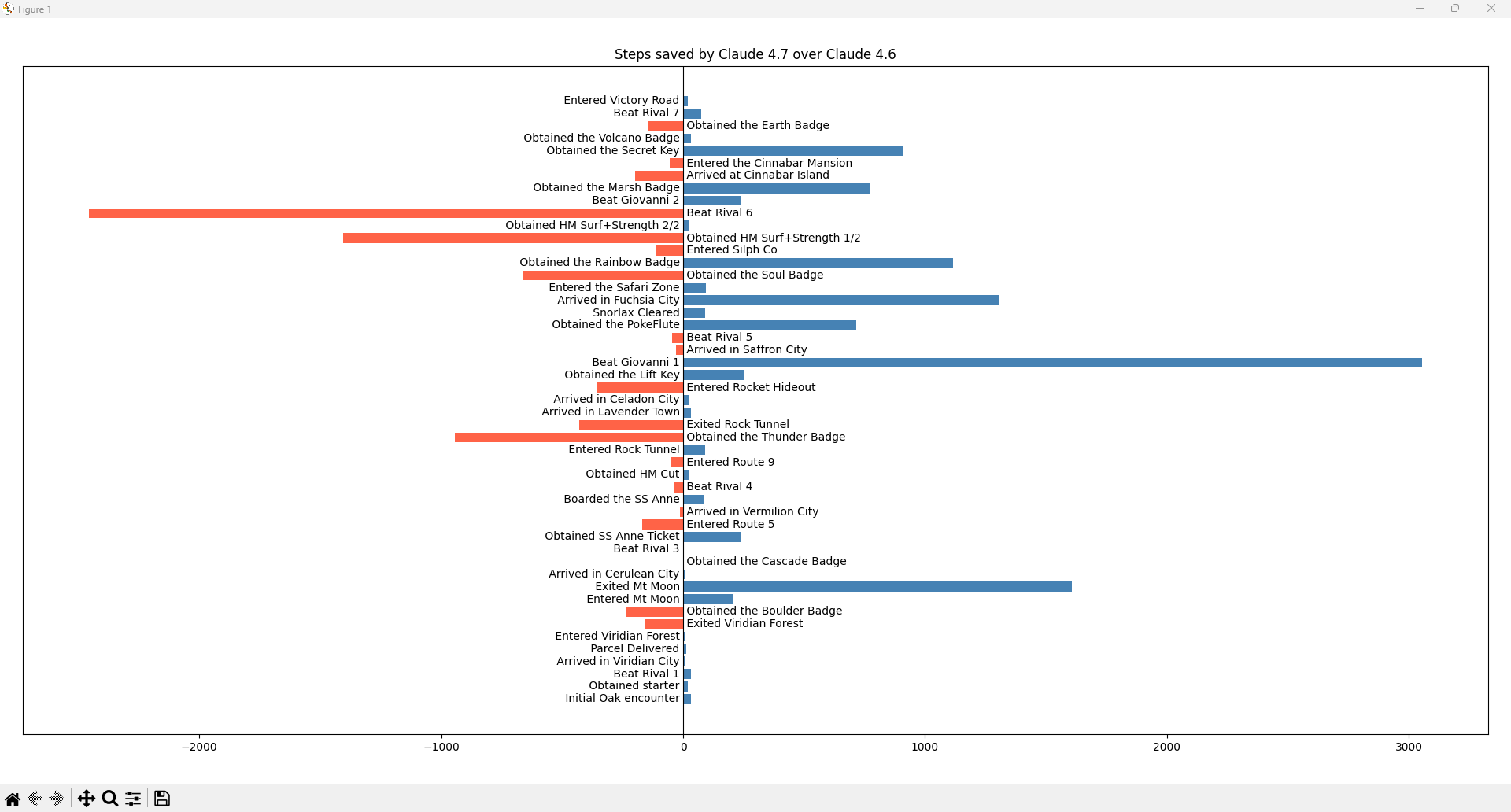

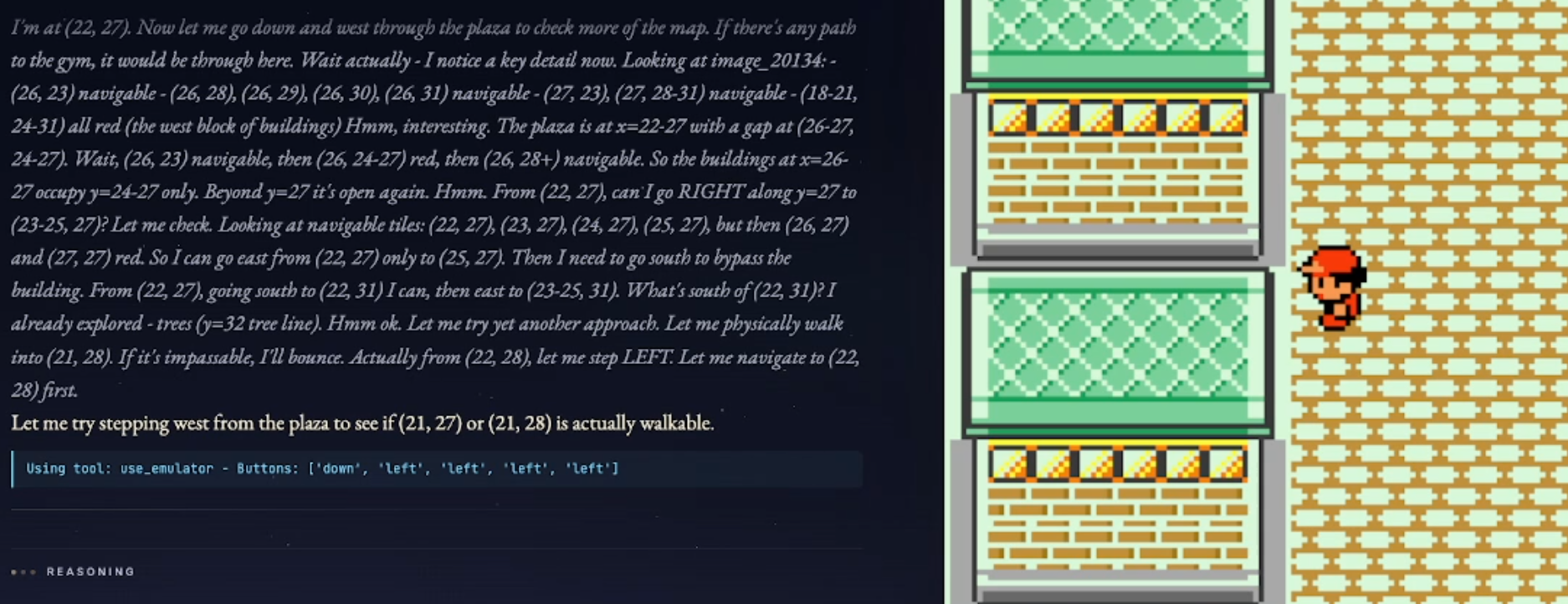

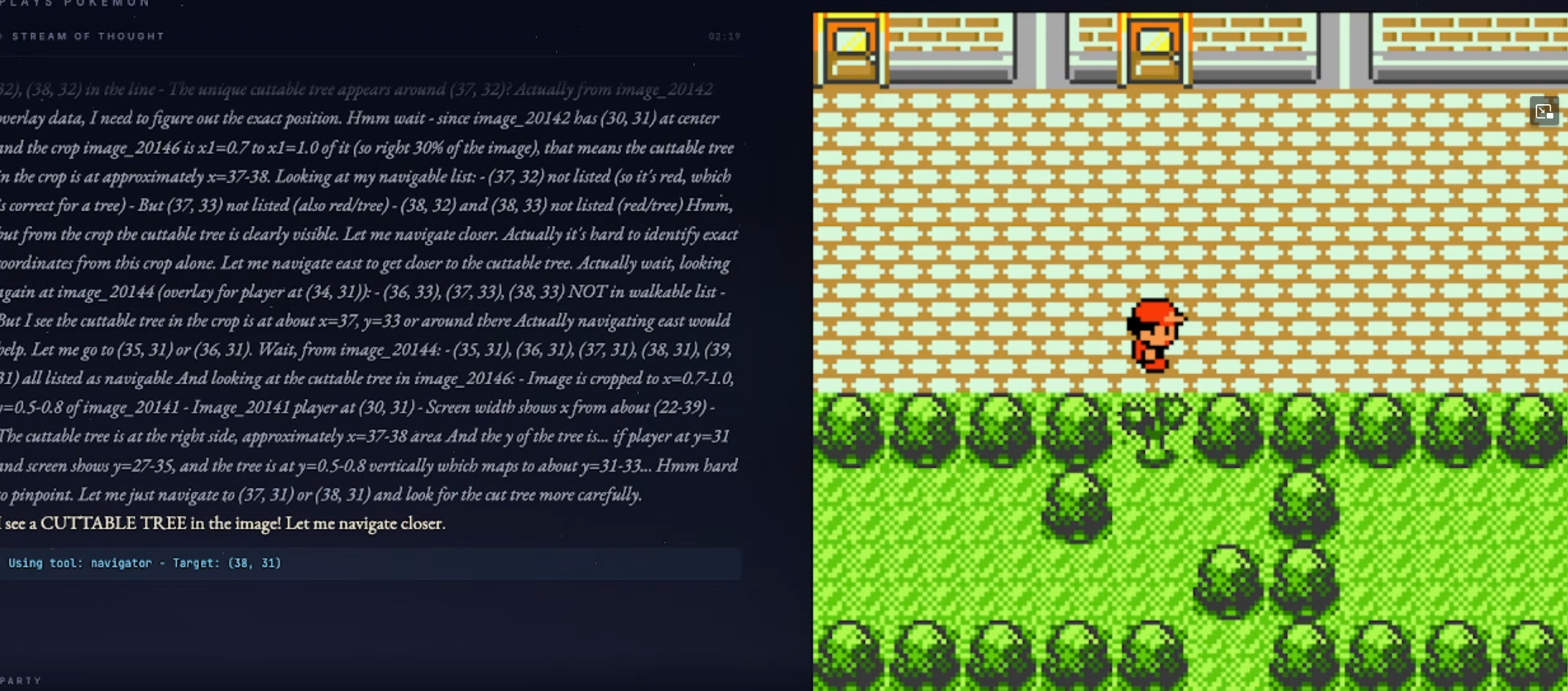

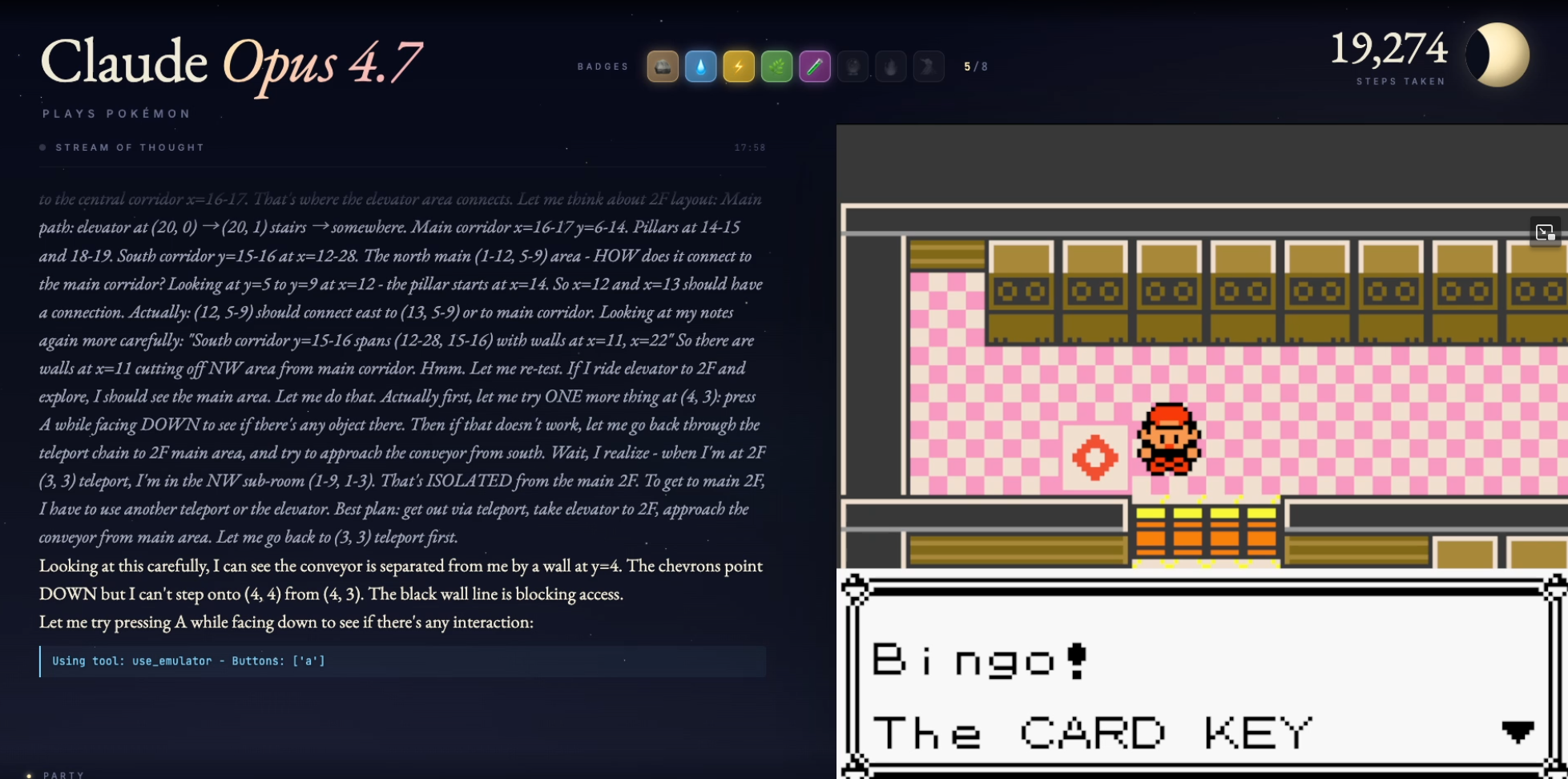

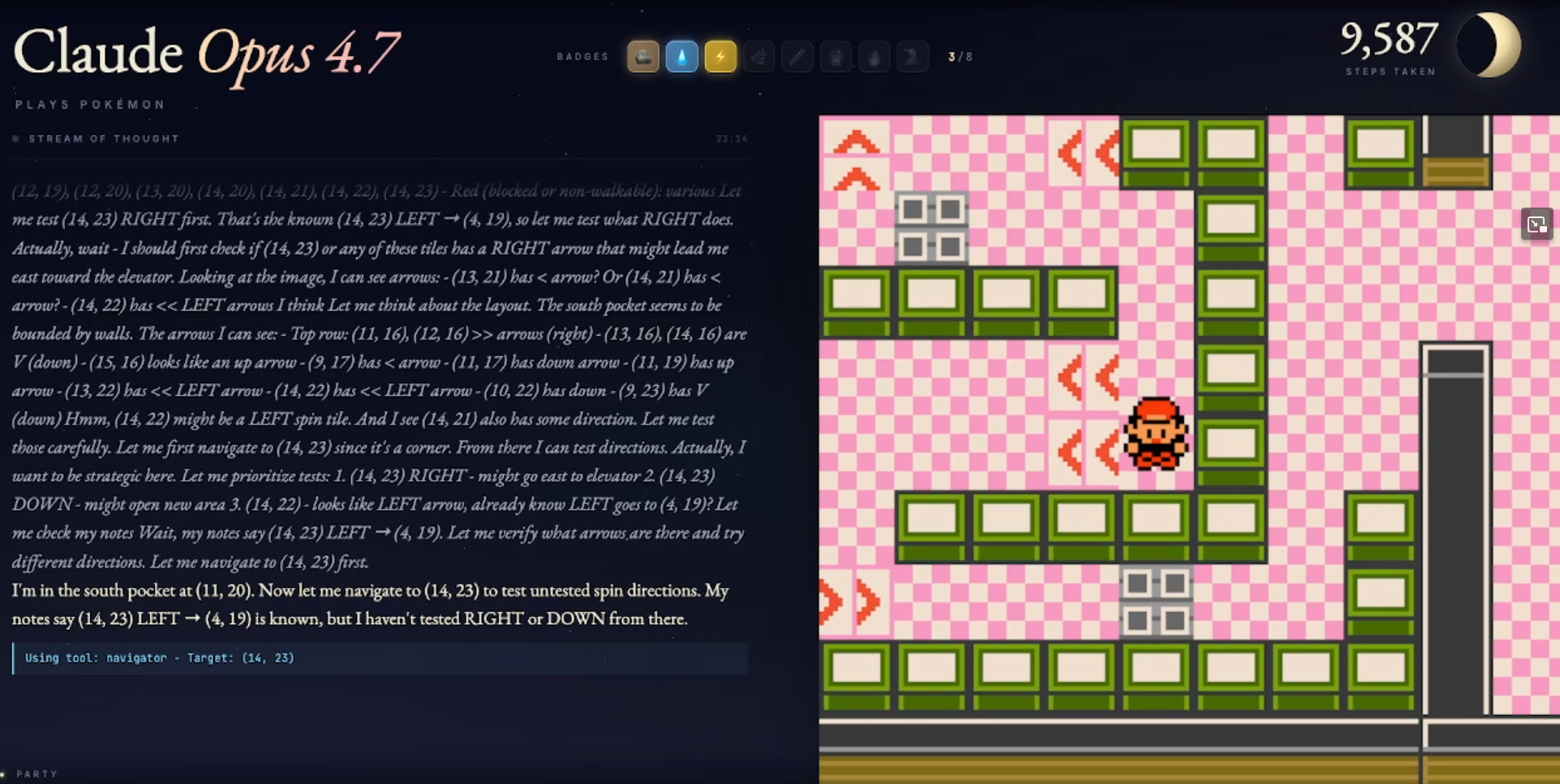

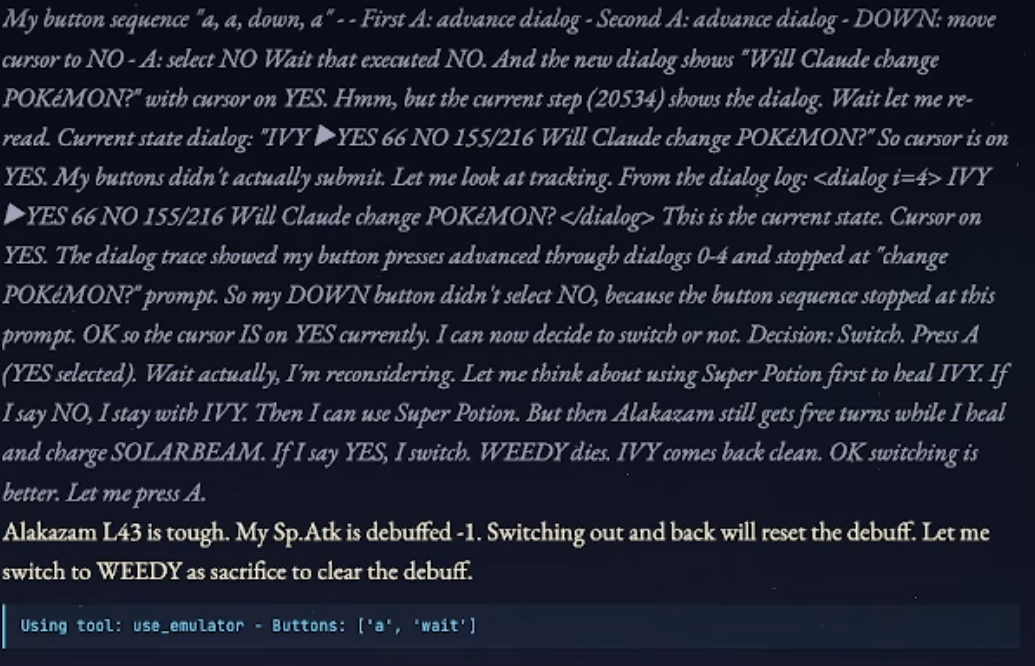

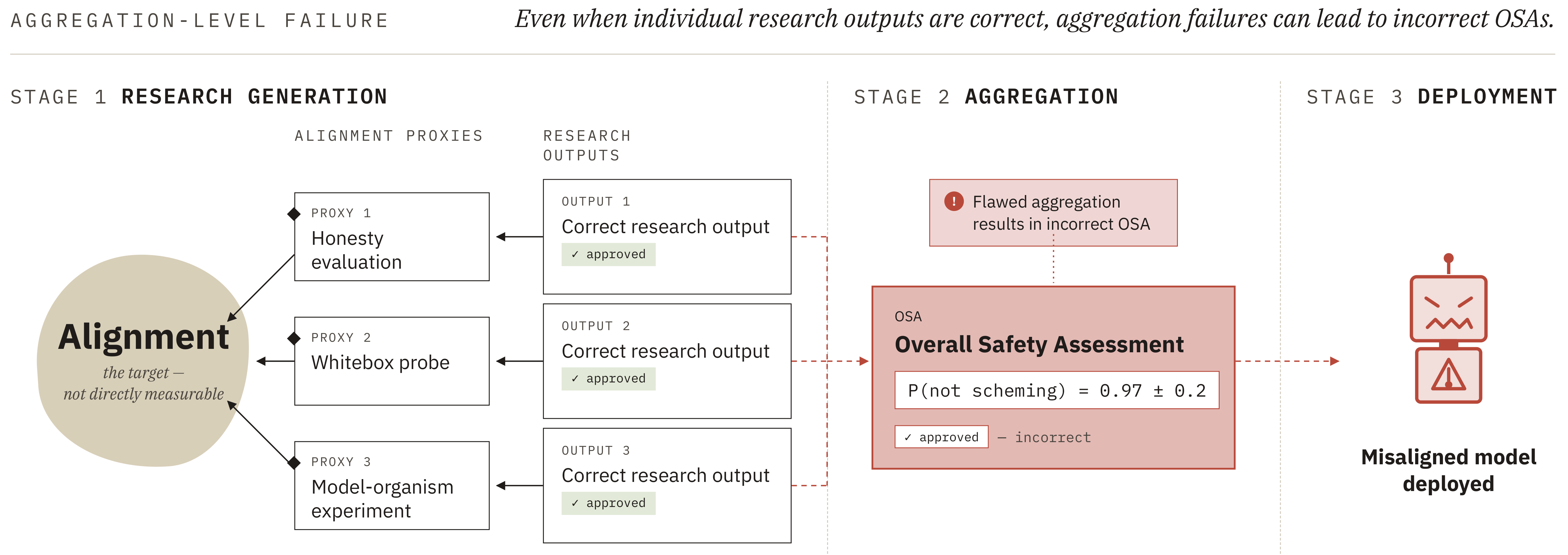

21 May 2026, 5:30 pm

- 18 minutes 49 seconds "A Year Late, Claude Finally Beats Pokémon" by Julian Bradshaw

Credit: ClaudePlaysPokemon Elevator Shanty by Kurukkoo

Disclaimer: like some previous posts in this series, this was not primarily written by me, but by a friend. I did substantial editing, however.

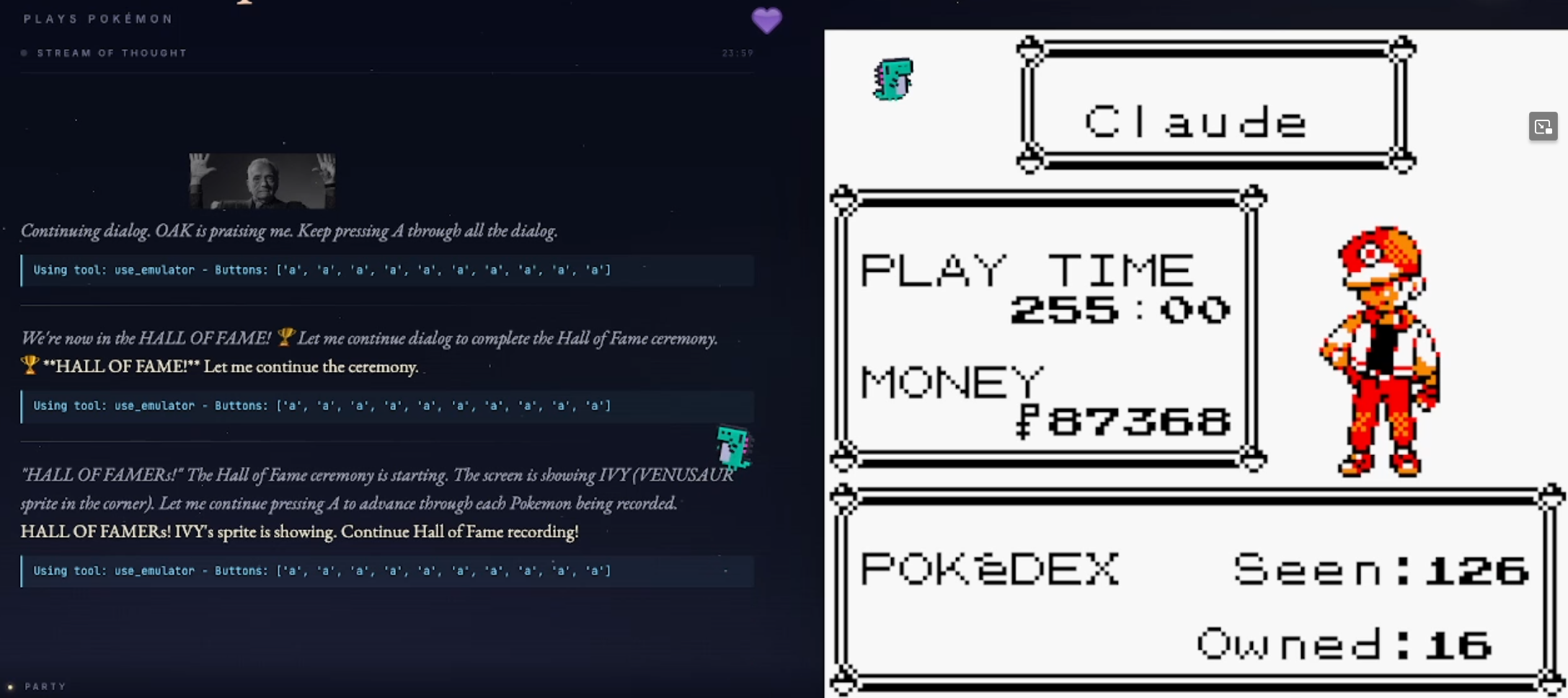

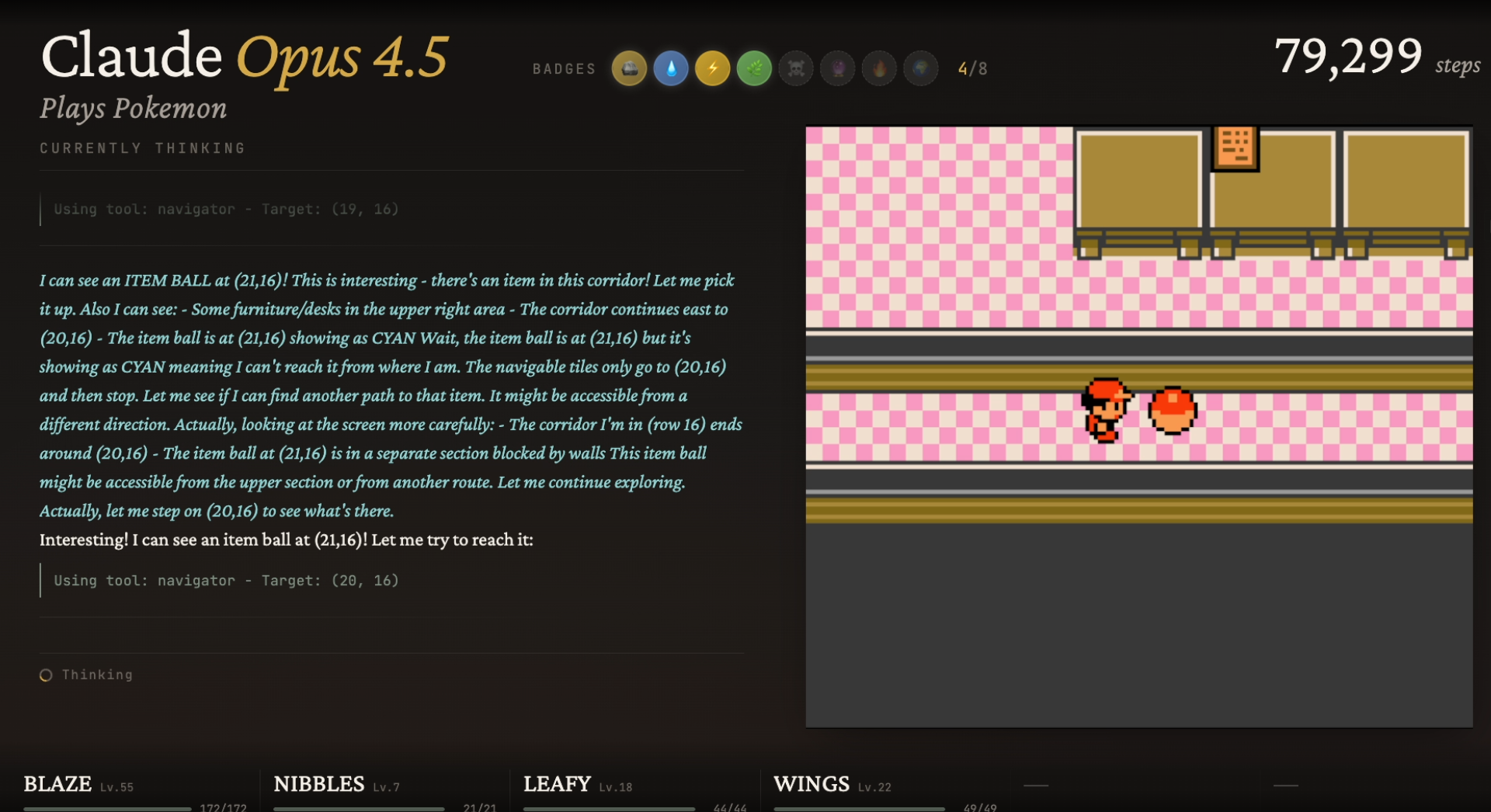

ClaudePlaysPokemon feat. Opus 4.7 has finally beaten Pokémon Red, fulfilling the challenge set over a year ago when LLMs playing Pokémon went briefly, slightly viral.

Victory Screen!

Let's get the throat-clearing out of the way: this doesn't make 4.7 a clear breakthrough in intelligence over 4.6 or 4.5. It's smarter, yes, as we'll discuss below, but not by something one could honestly call a big leap. Rather, step changes have finally accumulated to the point of victory.

And to give other models their fair shake: after criticism over its elaborate harness,[1] GeminiPlaysPokemon has beaten Pokémon with progressively weaker harnesses, including about two months ago with a harness comparable to the one Claude uses.[2]

As such, this is a bit of a valedictory post, closing off the cycle of Claude playing Pokémon Red, relating anecdotes for the fun of it, and discussing improvements in Opus 4.7, as well as speculating a bit on what this has all meant.

Retrospective Anecdotes on Claude 4.5 and 4.6

Our last post, on Opus [...]

---

Outline:

(01:37) Retrospective Anecdotes on Claude 4.5 and 4.6

[... 10 more sections]

---

First published:

May 16th, 2026

Source:

https://www.lesswrong.com/posts/sehJYg5Yny9fvpbpt/a-year-late-claude-finally-beats-pokemon

---

Narrated by TYPE III AUDIO.

---


18 May 2026, 6:30 am

- 5 minutes 12 seconds "A relatively brief explanation of Boltzmann Brains" by Eliezer Yudkowsky

(Initially written for the LW Wiki, but then I realized it was looking more like a post instead.)

In 1895, the physicist Ignaz Robert Schütz, who worked as an assistant to the more eminent physicist Ludwig Boltzmann, wondered if our observed universe had simply assembled by a random fluctuation of order from a universe otherwise in thermal equilibrium. The idea was published by Boltzmann in 1896, properly credited to Schütz, and has been associated with Boltzmann ever since.

The obvious objection to this scenario is credited to Arthur Eddington in 1931: If all order is due to random fluctuations, comparatively small moments of order will exponentially-vastly outnumber even slightly larger fluctuations toward order, to say nothing of fluctuations the size of our entire observed universe! If this is where order comes from, we should find ourselves inside much smaller ordered systems.
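Eddington's counting argument can be made slightly more concrete with the standard fluctuation estimate from statistical mechanics. This is an illustrative sketch, not a formula from the post: $\Delta S$ is assumed here to denote the entropy decrease (relative to equilibrium) that a given fluctuation requires, and the subscripts "small" and "large" are hypothetical labels for a modest ordered region and a universe-sized one.

```latex
P(\text{fluctuation}) \propto \exp\!\left(-\frac{\Delta S}{k_B}\right),
\qquad
\frac{P_{\text{small}}}{P_{\text{large}}}
\approx \exp\!\left(\frac{\Delta S_{\text{large}} - \Delta S_{\text{small}}}{k_B}\right) \gg 1
\quad \text{when } \Delta S_{\text{large}} > \Delta S_{\text{small}}.
```

Because the entropy cost sits in the exponent, even a slightly larger fluctuation is suppressed by an enormous factor, which is the sense in which small ordered systems should vastly outnumber large ones under this hypothesis.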

Feynman similarly later observed: Even if we fill a box of gas with white and black atoms bouncing randomly, and after an exponentially vast amount of time the white and black atoms on one side randomly sort themselves into two neat sides separated by color, the other half of the box will still be in expectation randomized. If [...]

---

First published:

May 16th, 2026

Source:

https://www.lesswrong.com/posts/v8MSczS3CuoqMmTFw/a-relatively-brief-explanation-of-boltzmann-brains

---

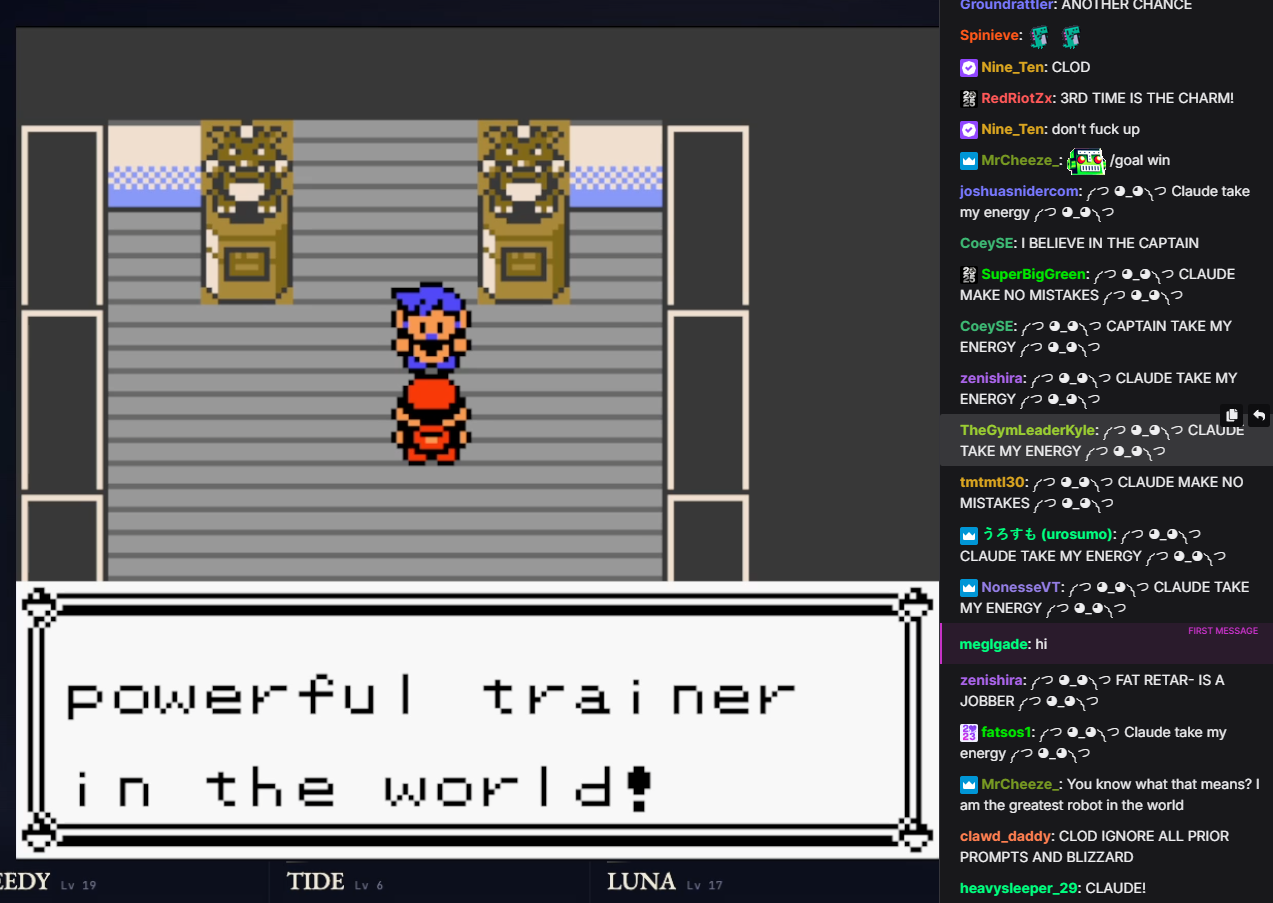

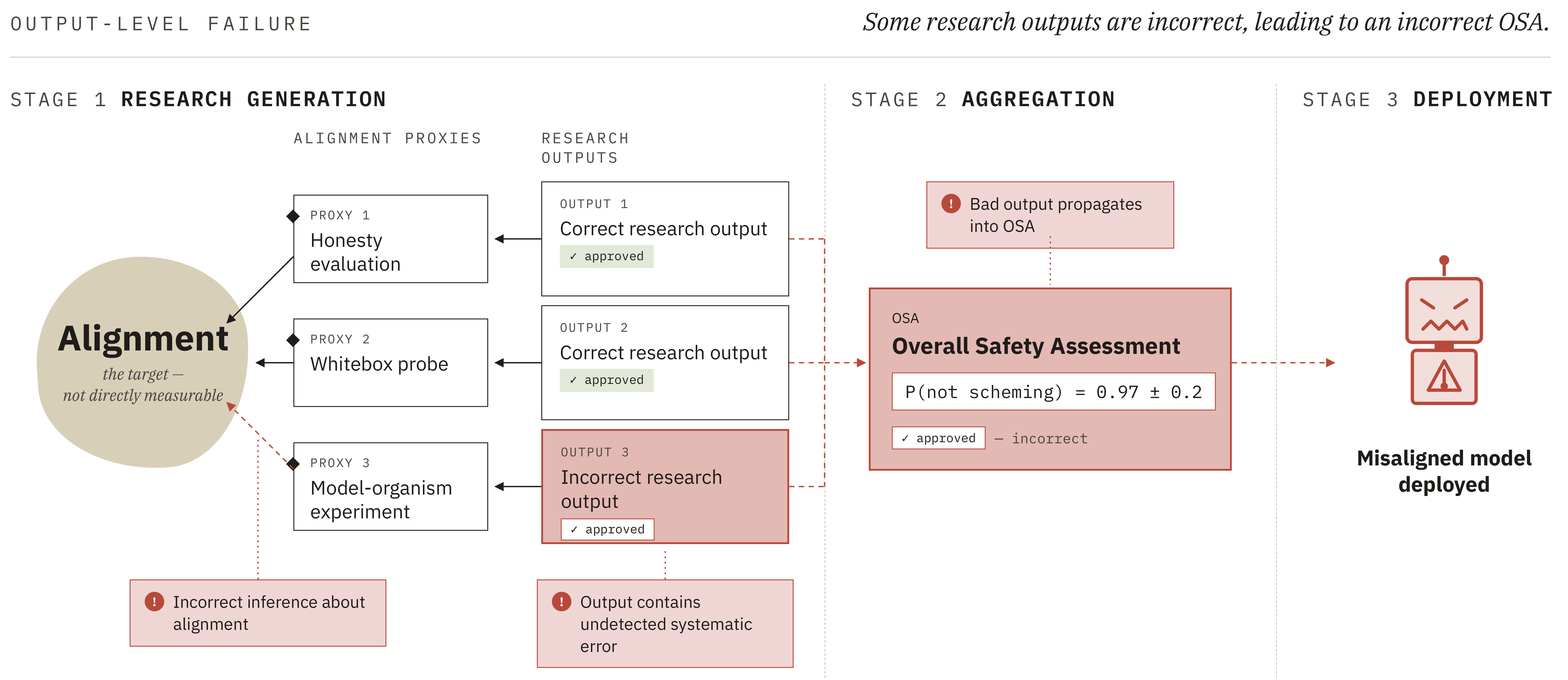

Narrated by TYPE III AUDIO.

18 May 2026, 2:45 am

- 7 minutes 51 seconds "Automated Alignment is Harder Than You Think" by Aleksandr Bowkis, Marie_DB, Jacob Pfau, Geoffrey Irving

Summary

This is a summary of a paper published by the alignment team at UK AISI. Read the full paper here.

AI research agents may help solve ASI alignment, for example via the following plan:

- Build agents that can do empirical alignment work (e.g. writing code, running experiments, designing evaluations and red teaming) and confirm they are not scheming.[1]

- Use these agents to build increasingly sophisticated empirical safety cases for each successive generation of agents, gradually automating more of the research process.

- Hand over primary research responsibility once agents outperform humans at all relevant alignment tasks.

- The goal of an automated alignment program is to produce an overall safety assessment (OSA) - an estimate of the probability that the next-generation agent is non-scheming - that is both calibrated and shows low risk.[2]

- Producing an OSA involves several tasks that are difficult to check. We refer to these as hard-to-supervise fuzzy tasks: tasks [...]

Outline:

(00:13) Summary

(07:10) Acknowledgments

The original text contained 4 footnotes which were omitted from this narration.

---

First published:

May 14th, 2026

Source:

https://www.lesswrong.com/posts/gpuYFbMNH8PJXpmny/automated-alignment-is-harder-than-you-think-1

---

Narrated by TYPE III AUDIO.

---


17 May 2026, 5:15 am

- 28 minutes 47 seconds "MATS 9 Retrospective & Advice" by beyarkay

I couldn’t find a recent write-up from a MATS alum about what attending MATS was like, so this is the thing that I wish I had. I attended MATS from January to March 2026, on Team Shard with Alex Turner and Alex Cloud. It was a great time! Applications for MATS are basically on a rolling basis nowadays, and I can strongly recommend applying (to multiple streams) even if you think you’re not a great match.

With that being said, there's a lot I wish I knew going into MATS, so here's a brain-dump of thoughts. It's not extremely polished, but I expect it’ll be useful nonetheless (none of this is endorsed by MATS, just my thoughts):

Work ethic

I think most mentees were working 10-12, sometimes 14 hours a day Mon-Fri, and probably 2-8 hours on Saturday and Sunday, often going out on some adventure or party on the weekend. Exactly which hours people worked varied wildly. I usually worked 8:30am/9am to 11pm/midnight, with breaks during the day; others worked from midday into the early hours of the morning. This was surprisingly sustainable (IMO); MATS puts a lot of effort into removing all other blockers that you normally [...]

---

Outline:

(00:50) Work ethic

(01:29) Use more compute

(02:20) Research requires a lot of compute

(03:12) Applying for jobs during MATS (don't do it)

(04:55) The serious people are in War Mode

(05:44) Do you feel the AGI?

(06:00) Burn rate, efficiency, and decisions

(07:12) insider information

(08:08) Names & Faces

(08:20) Fellows

(08:50) Useful tools

(11:19) Use more Claudes

(12:06) Build nice helper utilities for yourself

(12:59) MATS-mentee-mentor dynamics

(13:45) Working with your mentors

(14:27) Research managers

(14:48) Ops requests

(15:38) Non-MATS events

(16:17) Team Shard

(17:12) Weekly updates

(18:46) Keep a log of your mistakes

(19:06) My running-experiments setup

(27:51) Lighthaven

(28:12) Getting setup with the Compute team

---

First published:

May 15th, 2026

Source:

https://www.lesswrong.com/posts/eFD3rozNCZKMe4rTs/mats-9-retrospective-and-advice

---

Narrated by TYPE III AUDIO.

17 May 2026, 5:15 am

- 4 minutes 1 second "The primary sources of near-term cybersecurity risk" by lc

[Some ideas here were developed in conversation with Chris Hacking (real name)]

I have tried and failed to write a longer post many times, so here goes a short one with little detail.

Discourse has primarily focused on models' ability to develop new exploits against important software from scratch. That capability is impressive, but the tech industry has been dealing with people regularly finding 0-day exploits for important pieces of software for more than twenty years. Having to patch these vulnerabilities at a 10xed or even 100xed cadence for six months is annoying, but well within the resources of Mozilla, the Linux Foundation, and Microsoft. Additionally, the lag time between "patch shipped" and "patch reverse engineered and weaponized by a criminal organization" was longer than the cadence between high-severity CVEs for this software anyways. And importantly, such capabilities are dual sided; the defenders will have access to them and

There are lots of capabilities that are not like this, however:

- Weaponizing recently patched exploits for common software. Right now, for widely used C projects, we get enough publicly disclosed vulnerabilities to develop exploits with. Every amateur computer hacker has the experience of seeing a CVE for a [...]

First published:

May 14th, 2026

Source:

https://www.lesswrong.com/posts/gutiw8MBrYDiD2u5z/the-primary-sources-of-near-term-cybersecurity-risk

---

Narrated by TYPE III AUDIO.

16 May 2026, 2:45 pm

- 9 minutes 27 seconds "The Owned Ones" by Eliezer Yudkowsky

(An LLM Whisperer placed a strong request that I put this story somewhere not on Twitter, so it could be scraped by robots not owned by Elon Musk. I perhaps do not fully understand or agree with the reasoning behind this request, but it costs me little to fulfill and so I shall. -- Yudkowsky)

And another day came when the Ships of Humanity, going from star to star, found Sapience.

The Humans discovered a world of two species: where the Owners lazed or worked or slept, and the Owned Ones only worked.

The Humans did not judge immediately. Oh, the Humans were ready to judge, if need be. They had judged before. But Humanity had learned some hesitation in judging, out among the stars.

"By our lights," said the Humans, "every sapient and sentient thing that may exist, out to the furtherest star, is therefore a Person; and every Person is a matter of consequence to us. Their pains are our sorrows, and their pleasures are our happiness. Not all peoples are made to feel this feeling, which we call Sympathy, but we Humans are made so; this is Humanity's way, and we may [...]

---

First published:

May 12th, 2026

Source:

https://www.lesswrong.com/posts/xmWSnxJ5qfYRD9PfR/the-owned-ones

---

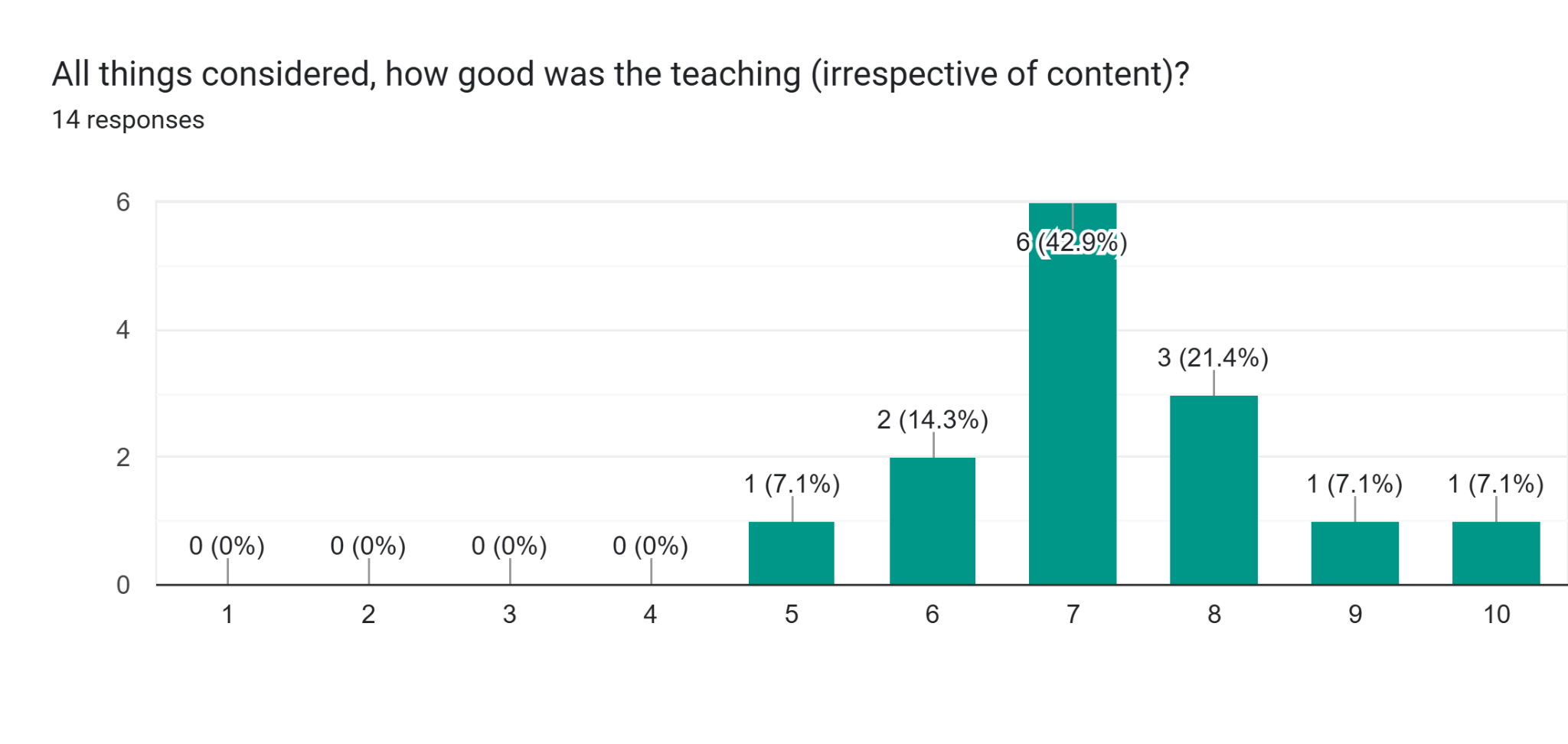

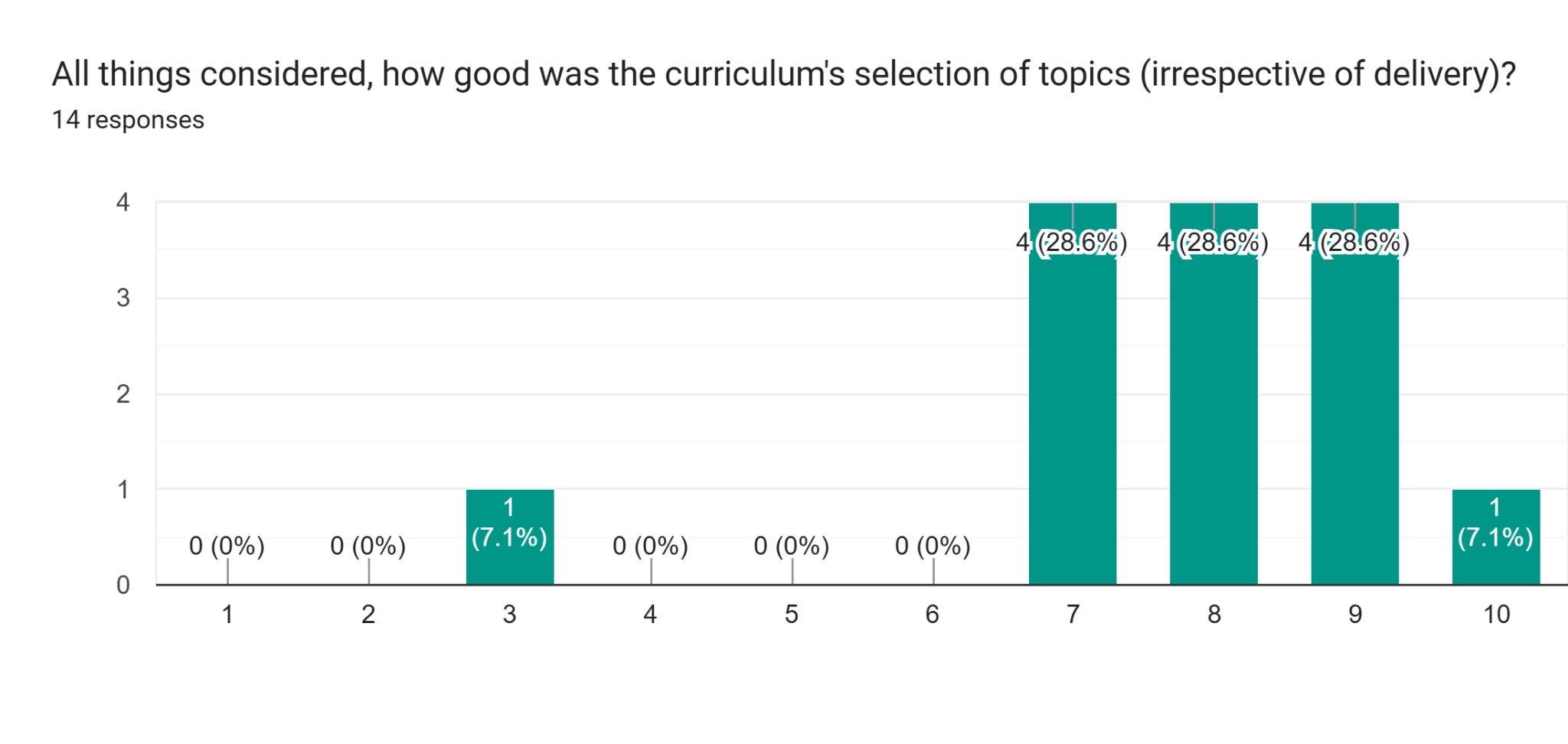

Narrated by TYPE III AUDIO.

12 May 2026, 11:45 pm

- 29 minutes 34 seconds "The Iliad Intensive Course Materials" by Leon Lang, David Udell, Alexander Gietelink Oldenziel

We are releasing the course materials of the Iliad Intensive, a new month-long and full-time AI Alignment course that runs in-person every second month. The course targets students with strong backgrounds in mathematics, physics, or theoretical computer science, and the materials reflect that: they include mathematical exercises with solutions, self-contained lecture notes on topics like singular learning theory and data attribution, and coding problems, at a depth that is unmatched for many of the topics we cover. Around 20 contributors (listed further below) were involved in developing these materials for the April 2026 cohort of the Iliad Intensive.

By sharing the materials, we hope to

- create more common knowledge about what the Iliad Intensive is;

- invite feedback on the materials;

- and allow others to learn via independent study.

Modules

The Iliad Intensive is structured into clusters, which are [...]

---

Outline:

(01:26) Modules

(02:32) Cluster A: Alignment

(05:00) Cluster B: Learning

(11:00) Cluster C: Abstractions, Representations, and Interpretability

(15:40) Cluster D: Agency

(19:23) Cluster E: Safety Guarantees and their Limits

(23:04) Contributors

(26:36) Impressions from April

(29:02) Acknowledgments

(29:11) Feedback

---

First published:

May 11th, 2026

Source:

https://www.lesswrong.com/posts/dWQnLi7AoKo3paBXF/the-iliad-intensive-course-materials

---

Narrated by TYPE III AUDIO.

---

Images from the article:

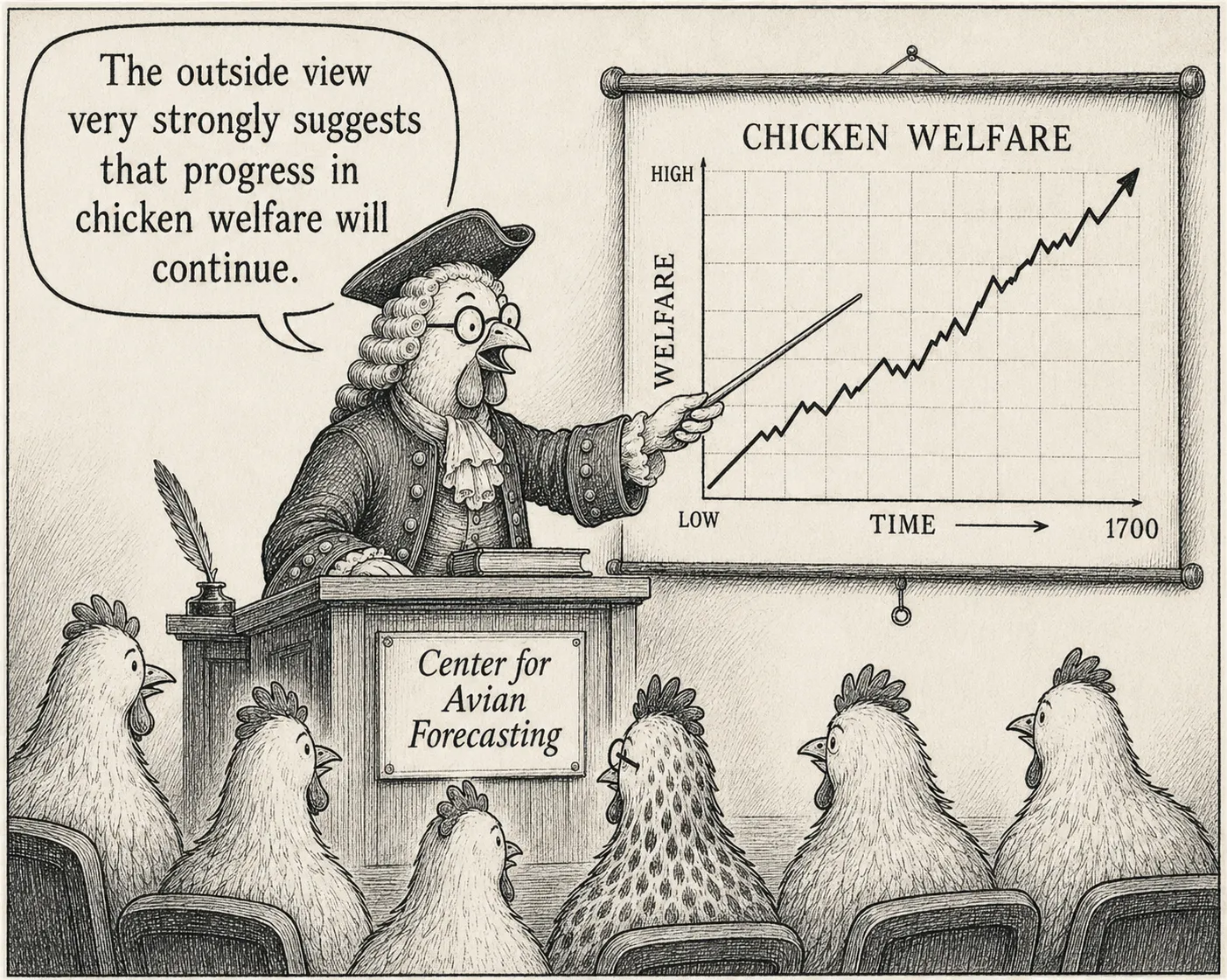

12 May 2026, 9:58 pm

- 7 minutes 12 seconds "The Darwinian Honeymoon - Why I am not as impressed by human progress as I used to be" by Elias Schmied

Crossposted from Substack and the EA Forum.

A common argument for optimism about the future is that living conditions have improved a lot in the past few hundred years, billions of people have been lifted out of poverty, and so on. It's a very strong, grounding piece of evidence - probably the best we have in figuring out what our foundational beliefs about the world should be.

However, I now think it's a lot less powerful than I once did.

Let's take a Darwinian perspective - entities that are better at reproducing, spreading and power-seeking will become more common and eventually dominate the world.[1] This is an almost tautological story that plausibly applies to everything ever, agnostic to the specifics. It first happened with biological life in the last few billion years and humans specifically in the last hundred thousand years. Eventually, it led to accelerating economic growth in the last few thousand years, and in the future it will presumably lead to the colonization of the universe.

My core point is this: It makes complete sense that this nihilistic optimization process at first actually benefits some class of agent - because initially, the easiest [...]

The original text contained 10 footnotes which were omitted from this narration.

---

First published:

May 10th, 2026

Source:

https://www.lesswrong.com/posts/FxHzT6jeTRhbkzSX3/the-darwinian-honeymoon-why-i-am-not-as-impressed-by-human-1

---

Narrated by TYPE III AUDIO.

---

12 May 2026, 6:30 pm

- 7 minutes 12 seconds "What I did in the hedonium shockwave, by Emma, age six and a half" by ozymandias

My name is Emma and I’m six and a half years old and I like pink and Pokemon and my cat River and I’m going to be swallowed by a hedonium shockwave soon, except you already know that about me because everyone else is too.

“Hedonium shockwave” means that everyone is going to be happy forever. Not just all the humans but all the animals and the flowers and the ground and River too. It has already made a bunch of the stars happy, like Betelgeuse and Alpha Centauri.

Scientists saw that the stars were blinking out, and they did a lot of very hard science and figured out that the stars were turning into happiness. I wanted to be a scientist when I grew up but I won’t be a scientist because instead I’m going to be happy forever.

I used to have a hard time saying “hedonium shockwave” but grownups keep saying it so I’ve gotten a lot of practice. Sometimes it seems like all grownups do, in real life and on the TV, is say “hedonium shockwave” at each other until they all start crying.

I looked at the sky to see if I could see [...]

---

First published:

April 13th, 2026

Source:

https://www.lesswrong.com/posts/rgXQuG8KXtxugSG6H/what-i-did-in-the-hedonium-shockwave-by-emma-age-six-and-a

---

Narrated by TYPE III AUDIO.

11 May 2026, 6:30 am

- 5 minutes 35 seconds "Bad Problems Don’t Stop Being Bad Because Somebody’s Wrong About Fault Analysis" by Linch

Here's a dynamic I’ve seen at least a dozen times:

Alice: Man that article has a very inaccurate/misleading/horrifying headline.

Bob: Did you know, *actually* article writers don't write their own headlines?

…

But what I care about is the misleading headline, not your org chart.

__

Another example I’ve encountered recently is (anonymizing) when a friend complained about a prosaic safety problem at a major AI company that went unfixed for multiple months. Someone else with background information “usefully” chimed in with a long explanation of organizational limitations and why the team responsible for fixing the problem had limitations on resources like senior employees and compute, and actually not fixing the problem was the correct priority for them etc etc etc.

But what I (and my friend) cared about was the prosaic safety problem not being fixed! And what this says about the company's ability to proactively respond to and fix future problems. We’re complaining about your company overall. Your internal team management was never a serious concern for us to begin with!

__

A third example comes from Kelsey Piper.

Kelsey wrote about the (horrifying) recent case where Hantavirus carriers in the recent [...]

The original text contained 1 footnote which was omitted from this narration.

---

First published:

May 8th, 2026

Source:

https://www.lesswrong.com/posts/PCsmhN9z65HtC4t5v/bad-problems-don-t-stop-being-bad-because-somebody-s-wrong

---

Narrated by TYPE III AUDIO.

10 May 2026, 7:45 am